Introduction:

In the vast ecosystem of the internet, websites rely on various tools and strategies to ensure their content is visible to the right audience while maintaining privacy and security. One such tool, often overlooked yet crucial, is the robots.txt file. In this post, we'll look at robots.txt: its purpose, structure, best practices, and impact on SEO.

Understanding Robots.txt:

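Robots.txt is a plain-text file placed at the root of a website (for example, https://www.example.com/robots.txt) that tells web robots, also known as crawlers or spiders, which parts of the site they may crawl. These robots are employed by search engines like Google, Bing, and others to crawl and index web pages, making them searchable to users. The file is advisory: well-behaved crawlers follow it, but it cannot force a crawler to comply.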
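To make the location rule concrete, here is a minimal Python sketch that works out which robots.txt file governs a given page. The function name `robots_txt_url` and the example.com addresses are placeholders chosen for illustration; the only assumption is that the file sits at the root of the same scheme and host as the page.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the robots.txt URL that applies to the given page URL."""
    parts = urlsplit(page_url)
    # Keep the scheme and host (including any port); drop the path,
    # query string, and fragment, then point at /robots.txt.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


print(robots_txt_url("https://www.example.com/blog/post?id=7"))
```

Each subdomain is treated as its own host, so a page on a hypothetical shop.example.com would be governed by that subdomain's own robots.txt rather than the one on www.example.com.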

Purpose of Robots.txt:

The primary purpose of robots.txt is to control which parts of a website search engine crawlers may crawl and which parts they should skip. By specifying directives in the robots.txt file, website owners can influence how their content is discovered and, indirectly, how it appears in search engine results pages (SERPs). Keep in mind that robots.txt governs crawling, not indexing: a blocked URL can still appear in results if other pages link to it.

Structure of Robots.txt:

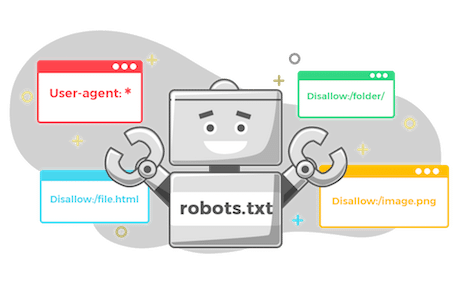

A typical robots.txt file consists of one or more directives that specify the behavior of web robots. The basic structure includes:

- User-agent: This directive specifies the web crawler to which the following rules apply. The wildcard "*" can be used to target all crawlers.

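- Disallow: This directive tells the specified user agent which directories or pages to avoid crawling. For example, a rule such as `Disallow: /admin/` (a hypothetical path) asks crawlers to stay out of everything under /admin/.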
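As a rough illustration of how these two directives combine, the sketch below embeds a small sample robots.txt file in a Python string and checks it with the standard library's `urllib.robotparser`. The paths, the `ExampleBot` and `SomeCrawler` names, and the example.com URLs are all invented for the example, and Python's parser may not match every search engine's interpretation exactly.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt: every crawler is kept out of /admin/ and /tmp/,
# and a hypothetical crawler called ExampleBot is kept out of the whole site.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /tmp/

User-agent: ExampleBot
Disallow: /
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS_TXT)

# A crawler with no dedicated group falls back to the "*" rules.
print(parser.can_fetch("SomeCrawler", "https://www.example.com/blog/"))
print(parser.can_fetch("SomeCrawler", "https://www.example.com/admin/users"))

# ExampleBot has its own group, which disallows every path.
print(parser.can_fetch("ExampleBot", "https://www.example.com/blog/"))
```

Each `User-agent` line opens a group, and the `Disallow` lines beneath it apply only to that group, which is why the same /blog/ URL gets a different answer for the two crawlers.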

Best Practices for Robots.txt:

When creating or modifying a robots.txt file, consider the following best practices:

- Be specific: Use precise directives to control crawler access to different parts of your site.

- Test your directives: Use the robots.txt report in Google Search Console or a similar tool to confirm that your robots.txt file is correctly configured.

- Regular updates: Update your robots.txt file as needed, especially when adding new content or restructuring your website.

- Avoid blocking important content: Be cautious not to accidentally block important pages or resources from being crawled and indexed.
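One way to put the testing and "avoid blocking important content" advice into practice is a small pre-deployment check. The sketch below is only an illustration under stated assumptions: `find_blocked` is a hypothetical helper, the draft rules and URLs are made up, and Python's `urllib.robotparser` uses simple prefix matching, so its verdicts can differ from a particular search engine's.

```python
from typing import Iterable, List
from urllib.robotparser import RobotFileParser


def find_blocked(robots_txt: str, urls: Iterable[str],
                 user_agent: str = "Googlebot") -> List[str]:
    """Return the URLs that the given robots.txt text blocks for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# A draft file with an overly broad rule: "/p" was meant to hide /private/,
# but as a prefix it also matches /products/ and /pricing.
DRAFT_ROBOTS_TXT = """\
User-agent: *
Disallow: /p
"""

# Pages this hypothetical site needs crawlers to reach.
MUST_CRAWL = [
    "https://www.example.com/",
    "https://www.example.com/products/widget",
    "https://www.example.com/pricing",
    "https://www.example.com/blog/",
]

blocked = find_blocked(DRAFT_ROBOTS_TXT, MUST_CRAWL)
for url in blocked:
    print("Blocked but important:", url)
```

Running a check like this whenever the file changes catches broad prefixes before they reach production; tightening the rule to `Disallow: /private/` would leave all four pages crawlable.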

Impact on SEO:

A well-optimized robots.txt file can positively impact your website's SEO performance by:

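- Steering crawlers away from low-value pages so that more of their crawl time goes to high-value ones.

- Keeping crawlers out of duplicate or private areas of the site. Blocking a URL in robots.txt does not guarantee it stays out of the index, and the file itself is publicly readable, so sensitive information needs real access controls rather than a Disallow rule.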

- Improving crawl efficiency and resource allocation.

Conclusion:

In website management and SEO, the robots.txt file plays a vital role in controlling how search engine crawlers interact with your site. By understanding its purpose, following best practices, and reviewing the file regularly, you can keep your important pages crawlable, make crawling more efficient, and support your site's visibility in search results.
